-

1 условная дифференциальная энтропия

Русско-английский научно-технический словарь Масловского > условная дифференциальная энтропия

-

2 средняя условная дифференциальная энтропия

General subject: average conditional differential entropy (conditional differential entropy averaged over all outcomes of another situation; see Теория передачи информации. Терминология. Вып. 94. М.: Наука, 1979)

Универсальный русско-английский словарь > средняя условная дифференциальная энтропия

-

3 условная дифференциальная энтропия

Programming: conditional differential entropy (differential entropy determined when the outcome of another situation is known; see Теория передачи информации. Терминология. Вып. 94. М.: Наука, 1979)

Универсальный русско-английский словарь > условная дифференциальная энтропия
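The two glosses above separate the entropy for one known outcome from its average over all outcomes. The following minimal sketch illustrates the difference on a toy model that is not taken from the dictionary: it assumes Y is uniform on [1, 2] and that X given Y = y is uniform on (0, y), so the entropy for a known outcome is ln y (in nats). The function names and the model are hypothetical.

```python
import math

def h_given_outcome(y: float) -> float:
    """Differential entropy (nats) of X given Y = y, assuming X | Y=y ~ Uniform(0, y)."""
    return math.log(y)

def average_conditional_entropy(a: float = 1.0, b: float = 2.0, steps: int = 100_000) -> float:
    """Average of h(X | Y=y) over Y ~ Uniform(a, b), computed with the midpoint rule."""
    width = (b - a) / steps
    density = 1.0 / (b - a)  # density of the assumed uniform Y
    total = 0.0
    for i in range(steps):
        y = a + (i + 0.5) * width
        total += density * h_given_outcome(y) * width
    return total

# Closed form of the integral of ln(y) over [1, 2], used as a reference value.
exact = 2 * math.log(2) - 1

print("known outcome y = 1.5:", h_given_outcome(1.5))
print("average over outcomes:", average_conditional_entropy())
print("closed-form average:  ", exact)
```

The value for a single outcome depends on y, while the averaged quantity is a single number for the pair (X, Y).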

-

4 условная дифференциальная энтропия случайной величины

условная дифференциальная энтропия случайной величины

The differential entropy of the conditional probability distribution of a random variable.

[Сборник рекомендуемых терминов. Выпуск 94. Теория передачи информации. Академия наук СССР. Комитет технической терминологии. 1979 г.]

Русско-английский словарь нормативно-технической терминологии > условная дифференциальная энтропия случайной величины

-

5 условная дифференциальная энтропия случайной последовательности

условная дифференциальная энтропия случайной последовательности

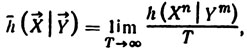

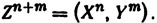

The conditional differential entropy, per unit time, of a segment of a continuous (in the set of values of its components) random sequence, given the corresponding segment of another continuous random sequence, in the limit as the segment length tends to infinity; its expression has the form

$$\lim_{T \to \infty} \frac{1}{T}\, h(X^n \mid Y^m),$$

where $X$ and $Y$ are continuous random sequences, and $n$ and $m$ are the numbers of components of the sequences $X$ and $Y$ on a segment of duration $T$.

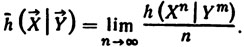

Note. The conditional differential entropy of a random sequence per component has the form $\lim_{n \to \infty} \frac{1}{n}\, h(X^n \mid Y^m)$.

Note. The segment length T may have a dimension other than time.
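The two normalizations named in this entry (per unit of T and per component) can be compared numerically. The sketch below is an illustration under assumptions that are not part of the dictionary entry: the components X_i are independent zero-mean Gaussians, Y_i = X_i + N_i with independent Gaussian noise, and both sequences have `rate` components per unit of T, so n = m = rate · T. All function and parameter names are hypothetical. The segment entropy is obtained from Gaussian log-determinants as the joint entropy minus the entropy of the conditioning segment.

```python
import numpy as np

def gaussian_entropy(cov: np.ndarray) -> float:
    """Differential entropy (nats) of a Gaussian vector with covariance matrix cov."""
    k = cov.shape[0]
    _, logdet = np.linalg.slogdet(cov)
    return 0.5 * (k * np.log(2 * np.pi * np.e) + logdet)

def segment_conditional_entropy(n: int, var_x: float, var_noise: float) -> float:
    """h(X^n | Y^n) for the assumed model Y_i = X_i + N_i with independent components."""
    cov_x = var_x * np.eye(n)
    cov_y = (var_x + var_noise) * np.eye(n)
    # Covariance of the stacked vector (X^n, Y^n); Cov(X_i, Y_i) = var_x.
    joint = np.block([[cov_x, cov_x], [cov_x, cov_y]])
    return gaussian_entropy(joint) - gaussian_entropy(cov_y)

def entropy_rates(T: int, rate: int, var_x: float, var_noise: float):
    """Return (entropy per unit of T, entropy per component) for a segment of length T."""
    n = rate * T
    h_segment = segment_conditional_entropy(n, var_x, var_noise)
    return h_segment / T, h_segment / n

for T in (1, 5, 20):
    per_time, per_component = entropy_rates(T, rate=4, var_x=2.0, var_noise=0.5)
    print(T, per_time, per_component)
```

For this memoryless model both ratios are already constant in T, so the limit is reached immediately, and the per-time value equals `rate` times the per-component value. Sequences with memory would need larger T before the ratios settle.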

[Сборник рекомендуемых терминов. Выпуск 94. Теория передачи информации. Академия наук СССР. Комитет технической терминологии. 1979 г.]

Русско-английский словарь нормативно-технической терминологии > условная дифференциальная энтропия случайной последовательности

-

6 условная дифференциальная энтропия случайной величины

General subject: conditional differential random-variable entropy (differential entropy of the conditional probability distribution of a random variable; see Теория передачи информации. Терминология. Вып. 94. М.: Наук…)

Универсальный русско-английский словарь > условная дифференциальная энтропия случайной величины

-

7 условная дифференциальная энтропия случайной последовательности

General subject: conditional differential random-sequence entropy (the conditional differential entropy, per unit time, of a segment of a continuous (in the set of component values) random sequence…)

Универсальный русско-английский словарь > условная дифференциальная энтропия случайной последовательности

-

8 дифференциальная энтропия условного распределения вероятностей

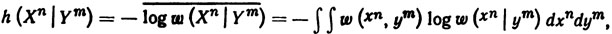

дифференциальная энтропия условного распределения вероятностей

A measure of the uncertainty of the conditional probability distribution of a continuous random variable, given the value of another continuous random variable, averaged over the values of the latter; its expression has the form

$$h(X^n \mid Y^m) = -\iint w(x^n, y^m)\, \log w(x^n \mid y^m)\, dx^n\, dy^m,$$

where $w(x^n, y^m) = w(x_1, \dots, x_n, y_1, \dots, y_m)$ is the joint probability density of the random variables $X^n = (X_1, \dots, X_n)$ and $Y^m = (Y_1, \dots, Y_m)$, and $w(x^n \mid y^m) = w(x_1, \dots, x_n \mid y_1, \dots, y_m)$ is the conditional probability density of the random variable $X^n$ given the value $y^m$ of the random variable $Y^m$; the integration is over the entire set of values $x^n, y^m$ of the random variables $X^n, Y^m$.
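As a rough numerical check of this definition, the sketch below evaluates the double integral on a grid for the simplest case n = m = 1, assuming (as an illustration only, not something stated in the entry) that X and Y are zero-mean jointly Gaussian with correlation coefficient `rho`. It compares the result with the known closed form for that case, ½ ln(2πe · var_x · (1 − rho²)) nats. The function names, grid size and integration range are hypothetical choices.

```python
import numpy as np

def conditional_entropy_numeric(var_x: float, var_y: float, rho: float,
                                half_width: float = 8.0, points: int = 801) -> float:
    """Approximate -∫∫ w(x, y) ln w(x | y) dx dy for a zero-mean bivariate Gaussian."""
    sx, sy = np.sqrt(var_x), np.sqrt(var_y)
    x = np.linspace(-half_width * sx, half_width * sx, points)
    y = np.linspace(-half_width * sy, half_width * sy, points)
    dx, dy = x[1] - x[0], y[1] - y[0]
    X, Y = np.meshgrid(x, y, indexing="ij")

    # Work with log-densities so that the tails never produce log(0).
    quad = (X**2 / var_x - 2 * rho * X * Y / (sx * sy) + Y**2 / var_y) / (1 - rho**2)
    log_joint = -0.5 * quad - np.log(2 * np.pi * sx * sy * np.sqrt(1 - rho**2))
    log_marginal_y = -0.5 * Y**2 / var_y - 0.5 * np.log(2 * np.pi * var_y)
    log_conditional = log_joint - log_marginal_y  # ln w(x | y)

    # Riemann sum over the grid; the integrand is negligible at the grid edges.
    return float(-np.sum(np.exp(log_joint) * log_conditional) * dx * dy)

def conditional_entropy_closed_form(var_x: float, rho: float) -> float:
    """Closed form for the Gaussian case, in nats."""
    return 0.5 * np.log(2 * np.pi * np.e * var_x * (1 - rho**2))

print(conditional_entropy_numeric(2.0, 1.0, 0.8))
print(conditional_entropy_closed_form(2.0, 0.8))
```

The natural logarithm is used here, so the result is in nats; a base-2 logarithm would give bits.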

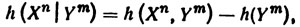

Note. The following equality holds:

$$h(X^n \mid Y^m) = h(X^n, Y^m) - h(Y^m),$$

where $h(X^n, Y^m)$ is the differential entropy of the random variable $(X^n, Y^m)$.
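The equality in this note, h(Xⁿ | Yᵐ) = h(Xⁿ, Yᵐ) − h(Yᵐ), can be derived from the definition in a few lines. The sketch below assumes that all the integrals exist and writes w(yᵐ) for the marginal density of Yᵐ, a symbol the entry itself does not introduce.

```latex
\begin{aligned}
h(X^n \mid Y^m)
  &= -\iint w(x^n, y^m)\,\log \frac{w(x^n, y^m)}{w(y^m)}\,dx^n\,dy^m \\
  &= -\iint w(x^n, y^m)\,\log w(x^n, y^m)\,dx^n\,dy^m
     + \iint w(x^n, y^m)\,\log w(y^m)\,dx^n\,dy^m \\
  &= h(X^n, Y^m) + \int w(y^m)\,\log w(y^m)\,dy^m \\
  &= h(X^n, Y^m) - h(Y^m).
\end{aligned}
```

The first line substitutes w(xⁿ | yᵐ) = w(xⁿ, yᵐ) / w(yᵐ); the third line integrates the joint density over xⁿ to obtain the marginal density of Yᵐ.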

[Сборник рекомендуемых терминов. Выпуск 94. Теория передачи информации. Академия наук СССР. Комитет технической терминологии. 1979 г.]

Русско-английский словарь нормативно-технической терминологии > дифференциальная энтропия условного распределения вероятностей

-

9 дифференциальная энтропия условного распределения вероятностей

General subject: differential conditional-probability-distribution entropy (a measure of the uncertainty of the conditional probability distribution of a continuous random variable given the value of another…)

Универсальный русско-английский словарь > дифференциальная энтропия условного распределения вероятностей

See also in other dictionaries:

Differential entropy — (also referred to as continuous entropy) is a concept in information theory that extends the idea of (Shannon) entropy, a measure of average surprisal of a random variable, to continuous probability distributions. … Wikipedia

Entropy (information theory) — In information theory, entropy is a measure of the uncertainty associated with a random variable. The term by itself in this context usually refers to the Shannon entropy, which quantifies, in the sense of an expected value, the information… … Wikipedia

Maximum entropy probability distribution — In statistics and information theory, a maximum entropy probability distribution is a probability distribution whose entropy is at least as great as that of all other members of a specified class of distributions. According to the principle of… … Wikipedia

Information theory — Information theory is a branch of applied mathematics and electrical engineering involving the quantification of information. Information theory was developed by Claude E. Shannon to find fundamental… … Wikipedia

Quantities of information — A simple information diagram illustrating the relationships among some of Shannon's basic quantities of information. The mathematical theory of information is based on probability theory and statistics, and measures information with several… … Wikipedia

Kullback–Leibler divergence — In probability theory and information theory, the Kullback–Leibler divergence (also information divergence, information gain, relative entropy, or KLIC) is a non-symmetric measure of the difference between two probability distributions P … Wikipedia

Multivariate normal distribution — MVN redirects here. For the airport with that IATA code, see Mount Vernon Airport. Probability density function Many samples from a multivariate (bivariate) Gaussian distribution centered at (1,3) with a standard deviation of 3 in roughly the… … Wikipedia

Asymptotic equipartition property — In information theory the asymptotic equipartition property (AEP) is a general property of the output samples of a stochastic source. It is fundamental to the concept of typical set used in theories of compression. Roughly speaking, the theorem… … Wikipedia

Cauchy distribution — Not to be confused with Lorenz curve. Cauchy–Lorentz Probability density function The purple curve is the standard Cauchy distribution Cumulative distribution function … Wikipedia

Information theory and measure theory — Many of the formulas in information theory have separate versions for continuous and discrete cases, i.e. integrals for the continuous case and sums for the discrete case. These versions can often be generalized… … Wikipedia

Rate–distortion theory — is a major branch of information theory which provides the theoretical foundations for lossy data compression; it addresses the problem of determining the minimal amount of entropy (or information) R that should be communicated over a channel, so … Wikipedia